Artificial intelligence (AI) is revolutionizing our world, and software development is no exception. AI-powered coding tools are generating lines of code at lightning speed, promising increased efficiency and productivity. But amidst the automation boom, a critical question emerges: can we trust AI to secure the very code it writes?

While AI holds immense potential for streamlining development, relying solely on black-box models for security can be a recipe for disaster. Just as AI can help build complex systems, it can also unwittingly leave cracks for attackers to exploit. In this digital age, where cyberattacks are becoming increasingly sophisticated, a single security loophole can compromise entire systems and devastate businesses. So, why should we still prioritize human involvement in securing AI-generated code? Here are four compelling reasons:

1. The Limits of AI Understanding

AI excels at pattern recognition and churning out vast amounts of code within defined parameters. However, it lacks the crucial human element of critical thinking and context awareness. AI doesn't understand the nuances of user behavior, potential attack vectors, or the broader ecosystem in which the code will operate. This limited understanding can lead to vulnerabilities that even rigorous testing might miss.
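
To make this concrete, here is a minimal sketch of code that looks correct and passes an ordinary functional check, yet is open to SQL injection. The table, the function names (`find_user_unsafe`, `find_user_safe`), and the payload are hypothetical and chosen only for illustration; this is not output from any particular AI tool.

```python
import sqlite3

def make_db():
    # In-memory database with two hypothetical users.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "user")],
    )
    return conn

def find_user_unsafe(conn, name):
    # String interpolation: fine for ordinary names, but the input
    # becomes part of the SQL statement itself.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()

def find_user_safe(conn, name):
    # Parameterized query: the driver treats the input strictly as data.
    query = "SELECT name, role FROM users WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()

conn = make_db()
payload = "' OR '1'='1"  # classic injection string

happy_path = find_user_unsafe(conn, "alice")   # the check a basic test would run
leaked = find_user_unsafe(conn, payload)       # returns every row in the table
blocked = find_user_safe(conn, payload)        # no user has this literal name

print(happy_path, len(leaked), blocked)
```

A happy-path test on `find_user_unsafe` succeeds, which is exactly why a reviewer who knows how the function will be exposed to untrusted input is still needed.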

2. The Bias Blind Spot

AI algorithms are trained on datasets created by humans, and those datasets often carry unintended biases. These biases can inadvertently creep into the code, introducing potential security risks. For example, an AI trained on biased data might prioritize security for certain user groups over others, creating vulnerabilities for the less-protected groups. Human oversight is essential to identify and mitigate such biases before the code goes live.

3. The Creativity Gap

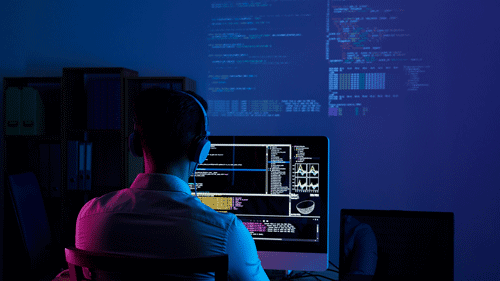

Cybercriminals are constantly innovating and devising new ways to exploit vulnerabilities. To stay ahead in this cat-and-mouse game, we need creative solutions that AI, in its current state, struggles to offer. Humans, with their diverse perspectives and ability to think outside the box, can conceive of unique security measures that outsmart malicious actors. Carbonetes’ team loves exploring the potential of AI while, at the same time, applying vulnerability expertise in the areas where we humans excel.

4. The Importance of Explainability

In today's increasingly transparent world, accountability for security flaws is paramount. When something goes wrong, we need to understand why and how it happened. Unfortunately, AI's decision-making processes are often shrouded in a veil of complexity. Humans, on the other hand, can explain their reasoning and thought processes, providing invaluable insights for improving future security practices.

So, how can we harness the power of AI while ensuring the secure development of code? The answer lies in a synergistic approach that combines the efficiency of AI with the vigilance and intelligence of humans. Here are some key strategies:

- Human-in-the-loop development: AI tools should be used as assistants, not replacements, for human developers. Humans should always review and adjust AI-generated code, ensuring it aligns with security best practices and project requirements.

- Security education and training: Developers need to be equipped with the knowledge and skills to identify and mitigate security vulnerabilities in AI-generated code. Regular training programs and awareness campaigns are crucial to building a security-conscious development culture.

- Robust testing and validation: Even with human oversight, rigorous testing and validation processes are essential for catching any remaining vulnerabilities. Automated testing tools can be combined with manual penetration testing to ensure comprehensive security assessment.

- Transparency and explainability: AI developers should strive to make their algorithms more transparent and explainable. This allows for a better understanding of potential biases and facilitates collaboration between humans and AI in securing the code.
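
As a rough illustration of how automated checks and human review can work together, the sketch below scans a snippet of generated Python for a couple of well-known risky patterns and produces a list of findings for a person to look at. The rule set and the `flag_for_review` helper are assumptions made for this example; a real pipeline would rely on a dedicated scanner with far broader coverage.

```python
import ast

# Hypothetical, deliberately tiny rule set for illustration only.
RISKY_CALLS = {"eval", "exec"}

def flag_for_review(source: str) -> list[str]:
    """Return human-readable findings that a reviewer should inspect."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Direct calls such as eval(...) or exec(...).
        if isinstance(func, ast.Name) and func.id in RISKY_CALLS:
            found.append((node.lineno, f"call to {func.id}()"))
        # Any call passing shell=True, e.g. subprocess.run(cmd, shell=True).
        for kw in node.keywords:
            if (
                kw.arg == "shell"
                and isinstance(kw.value, ast.Constant)
                and kw.value.value is True
            ):
                found.append((node.lineno, "shell=True passed to a call"))
    return [f"line {lineno}: {message}" for lineno, message in sorted(found)]

# Stand-in for a piece of AI-generated code awaiting review.
generated = '''import subprocess
def run(cmd):
    return subprocess.run(cmd, shell=True)
def calc(expr):
    return eval(expr)
'''

findings = flag_for_review(generated)
for finding in findings:
    print("needs human review:", finding)
```

The script only flags; the decision to accept, rewrite, or reject the code stays with the reviewer, which is the point of keeping a human in the loop.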

By embracing this collaborative approach, we can unlock the full potential of AI in software development while safeguarding against lurking security threats. Remember, in the world of code, it's not just about speed and efficiency; it's about building strong, secure systems that can withstand the challenges of the digital age. And for that, the human firewall remains an indispensable line of defense.

3 Comments

This is a refreshing take! Emphasizing collaboration between AI and humans could really strengthen our security practices moving forward.

Such an important conversation! Understanding AI is important; we need to understand its conclusions if we're going to trust it with our code.

Absolutely agree about the bias issue! It’s crucial we keep an eye on how AI can unintentionally favor certain groups. Human oversight is definitely needed.